Your AI Forgot You Again And That Is Normal

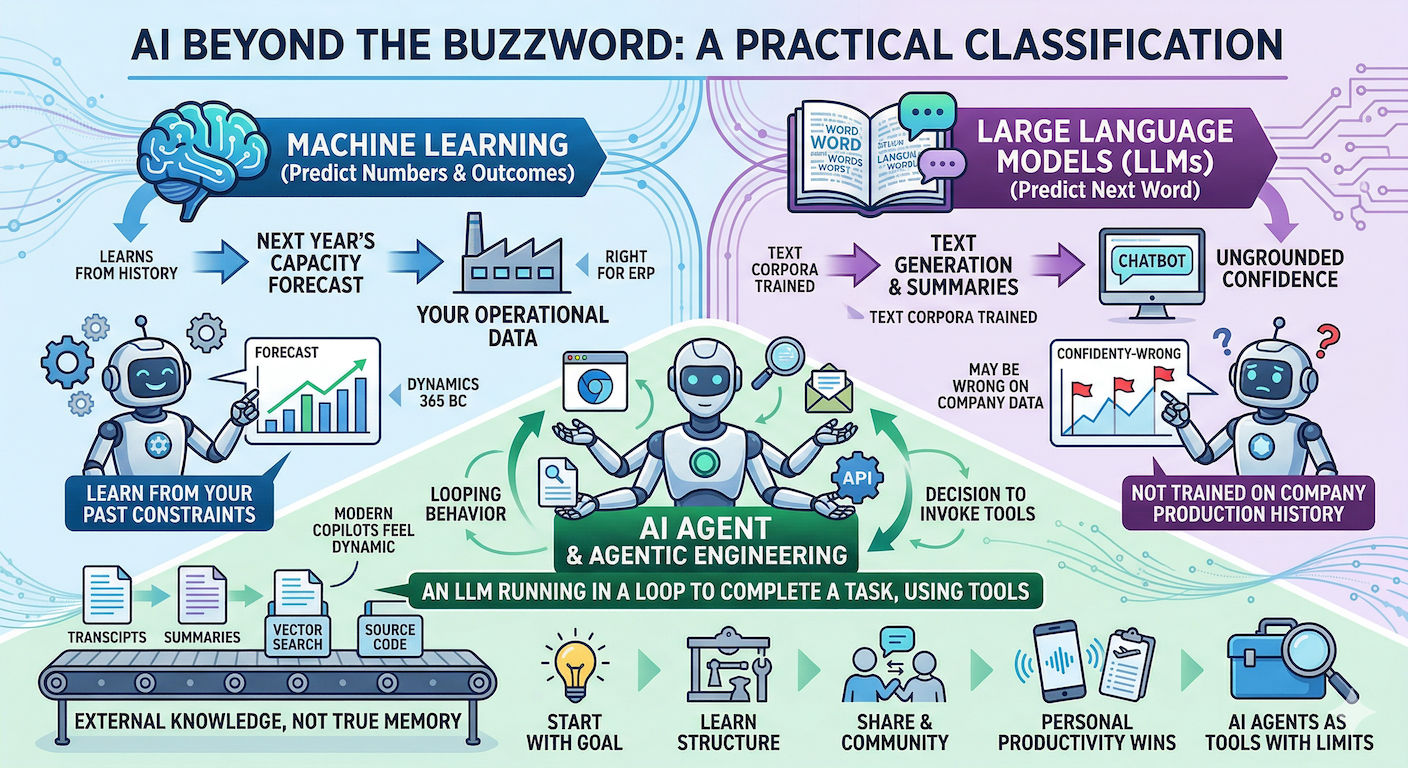

AI has become a catch-all term, which is why so many smart people still talk past each other. In this conversation, we separate “AI” into two practical categories: machine learning models trained on your own data to predict numbers or outcomes, and large language models (LLMs) trained on massive text corpora to predict the next token (roughly, the next word). That distinction matters for real work in Dynamics 365 Business Central and in software development. If a manufacturer wants next year’s capacity forecast, an LLM can sound confident while being wrong, because it was never trained on that company’s production history. A machine learning model is often the better fit because it learns from your operational data: your items, your production history, and your past constraints.
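To make "trained on your data" concrete, here is a deliberately tiny sketch: a straight-line trend fitted to a company's own history and extended forward. It is a stand-in for a real forecasting model, not a recommendation of one. The function names and the sample figures are hypothetical and exist only for illustration.

```python
def fit_trend(history: list[float]) -> tuple[float, float]:
    """Fit y = intercept + slope * period by ordinary least squares."""
    n = len(history)
    if n < 2:
        raise ValueError("need at least two periods of history")
    periods = range(n)
    mean_x = sum(periods) / n
    mean_y = sum(history) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(periods, history))
    variance = sum((x - mean_x) ** 2 for x in periods)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def forecast(history: list[float], periods_ahead: int) -> list[float]:
    """Extend the fitted trend past the end of the history."""
    slope, intercept = fit_trend(history)
    start = len(history)
    return [intercept + slope * (start + k) for k in range(periods_ahead)]


# Hypothetical machine hours used per quarter (illustrative numbers only).
machine_hours = [100.0, 110.0, 120.0, 130.0]
next_two_quarters = forecast(machine_hours, periods_ahead=2)
```

The point is where the numbers come from: the prediction is derived entirely from the history you supply, which is exactly what a general-purpose LLM does not have.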

Once LLMs entered everyday workflows, the industry shifted toward AI agents and agentic engineering. A useful technical definition is simple: an agent is an LLM running in a loop, using tools, until a task is complete. The “tools” are the bridge between text generation and action: a scripted browser, a file search function, an email connector, or an API call. The LLM cannot click buttons or run code by itself, but it can decide when to invoke a tool, read the output, and iterate. That looping behavior is why modern copilots feel dynamic compared to early chatbots. It also explains why different products (Cursor, GitHub Copilot, Claude, OpenAI tools) can produce different results with the same underlying model: the orchestration, tool access, and context handling shape the outcome.
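The "LLM in a loop with tools" definition can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how any particular product works: `fake_llm` is a scripted stand-in for a real model call, and `search_files`, `FILES`, and the message format are hypothetical names invented for this example.

```python
from typing import Callable

# Hypothetical in-memory "repository" the tool can search.
FILES = {
    "README.md": "Extension for posting sales orders.",
    "SalesPost.al": 'codeunit 50100 "Sales Post Helper"',
}


def search_files(query: str) -> str:
    """Tool: return names of files whose content mentions the query."""
    hits = [name for name, text in FILES.items() if query.lower() in text.lower()]
    return ", ".join(hits) if hits else "no matches"


TOOLS: dict[str, Callable[[str], str]] = {"search_files": search_files}


def fake_llm(messages: list[dict]) -> dict:
    """Scripted stand-in for a model: ask for a tool once, then answer."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "final", "text": f"Found in: {last['content']}"}
    return {"type": "tool_call", "tool": "search_files", "argument": "codeunit"}


def run_agent(task: str, max_steps: int = 5) -> str:
    """The loop: call the model, run any requested tool, feed the result back."""
    messages = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        reply = fake_llm(messages)
        if reply["type"] == "final":
            return reply["text"]
        tool = TOOLS[reply["tool"]]        # the model only names the tool
        result = tool(reply["argument"])   # the surrounding code executes it
        messages.append({"role": "tool", "content": result})
    return "stopped: step limit reached"
```

Everything outside `fake_llm` is orchestration: which tools exist, what gets appended to `messages`, and when the loop stops. Those are the parts that differ between products running the same model.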

The biggest misconception is memory. Humans carry long-term memory and effectively “unlimited” context across days of work, but LLMs do not. A model responds only to what you send in the current request, within a limited context window, and retains nothing between requests. What people call “agent memory” is usually external knowledge: saved transcripts, summaries, vector search, pointers to files, or repositories the agent can re-open and re-read. Some tools summarize long chats to stay under token limits, but summarization can delete crucial nuance. A better pattern is offloading: store full conversation logs in files, keep a shorter running summary, and include references the agent can follow when it needs the original details. For AL development, giving an agent access to source code such as the BC History repository, plus any relevant folders in your extension, often beats hoping it “remembers” how Business Central works.
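The offloading pattern described above can be sketched as follows. This is one possible shape, assuming a JSON Lines log file and short notes supplied by the caller (in practice the model itself might write them). The class name, file name, and sample messages are all hypothetical.

```python
import json
import tempfile
from pathlib import Path


class OffloadedMemory:
    """Full messages go to a log file; only short notes with pointers stay in context."""

    def __init__(self, log_path: Path, max_summary_items: int = 3):
        self.log_path = log_path
        self.max_summary_items = max_summary_items
        self.summary: list[dict] = []
        # Continue numbering if the log already exists from an earlier session.
        self._next_line = 0
        if log_path.exists():
            with log_path.open(encoding="utf-8") as f:
                self._next_line = sum(1 for _ in f)

    def record(self, role: str, content: str, note: str) -> int:
        """Append the full message to the log and keep a short note pointing at it."""
        line_no = self._next_line
        with self.log_path.open("a", encoding="utf-8") as f:
            f.write(json.dumps({"role": role, "content": content}) + "\n")
        self._next_line += 1
        self.summary.append({"note": note, "log_line": line_no})
        self.summary = self.summary[-self.max_summary_items:]  # trim notes, never the log
        return line_no

    def context(self) -> str:
        """Short text to send with the next request, including references."""
        return "\n".join(
            f"- {item['note']} (full text: {self.log_path.name}#{item['log_line']})"
            for item in self.summary
        )

    def recall(self, line_no: int) -> dict:
        """Follow a reference back to the original, unsummarized message."""
        with self.log_path.open(encoding="utf-8") as f:
            for index, line in enumerate(f):
                if index == line_no:
                    return json.loads(line)
        raise KeyError(line_no)


def demo() -> tuple[str, dict]:
    with tempfile.TemporaryDirectory() as tmp:
        memory = OffloadedMemory(Path(tmp) / "chat.jsonl", max_summary_items=2)
        memory.record("user", "Posting fails for blocked customers.", "User reported a posting failure")
        memory.record("assistant", "The check runs before validation.", "Assistant analysed the cause")
        memory.record("user", "Please add a test for that case.", "User asked for a test")
        return memory.context(), memory.recall(0)
```

In `demo`, the oldest note drops out of the running summary once the limit is reached, yet `recall(0)` still returns the original message from the log. Trimming the summary loses nothing as long as the references remain.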

The most practical takeaway is how to adopt AI without getting overwhelmed. Start with your goal, then learn just enough structure to complete that task: targeted courses, workshops, or curated playlists can build a mental model faster than random experimentation. Once the basics click, learning by doing becomes powerful. Sharing what you learn also pays off, especially in open source and community-driven ecosystems, because reputation and collaboration compound in a way that hoarding tricks never does. The conversation also highlights personal productivity wins: building small agents to search Gmail and Outlook for visa travel records, and using voice mode for conference prep and language practice. As multimodal AI improves, the “interface” keeps changing, but the fundamentals remain: pick the right model type, provide the right context, and treat agents as tools with limits, not people with memory.